On Monday, the website Futurism reported that Sports Illustrated has been publishing AI-written stories under the bylines of journalists who do not, strictly speaking, "exist." The site had created bios for these imaginary authors; the author photos, naturally, were AI-generated. If you have ever encountered AI-generated copy or art, which you almost certainly have, you do not need me to tell you that this was all uncanny, deeply dispiriting junk. That is all that AI-generated writing has proven itself capable of being to this point, and the posts highlighted by Futurism, which are chipper and circular and obvious when they are not suddenly extremely weird, are that sort of post. They are designed not to answer questions—again, because it is fed on and built from online's recycled idiocies, AI cannot do this reliably—so much as they are to snag passing search-engine traffic.

A recognizable and respected site like Sports Illustrated, just given that Sports Illustrated was for decades the apex of American sports writing, would be very useful for this sort of gambit, but posts like this are useless both by design and by default. Someone looking for information on volleyball, say, might naturally turn to Sports Illustrated when their query turns up a link to that site. They would be rewarded by a story bylined by "Drew Ortiz," who is not a real person. ("Drew has spent much of his life outdoors," his bio read, "and is excited to guide you through his never-ending list of the best products to keep you from falling to the perils of nature.") In that story, Drew allowed that volleyball "can be a little tricky to get into, especially without an actual ball to practice with." The reader would get nothing from having read this beyond the faint revulsion and sadness that comes with encountering this kind of uncanny prose in the wild, as well as the sense that Sports Illustrated has some weird spammy shit on it now; the publisher would get some money from clicked affiliate links and fractions of a cent from subjecting visitors to the ads they saw on that page before they closed the tab. A publisher that cared about a publication even a little bit would not put something like this up on their site. A publisher that didn't would care more about the second part, the part about the money, and do it anyway.

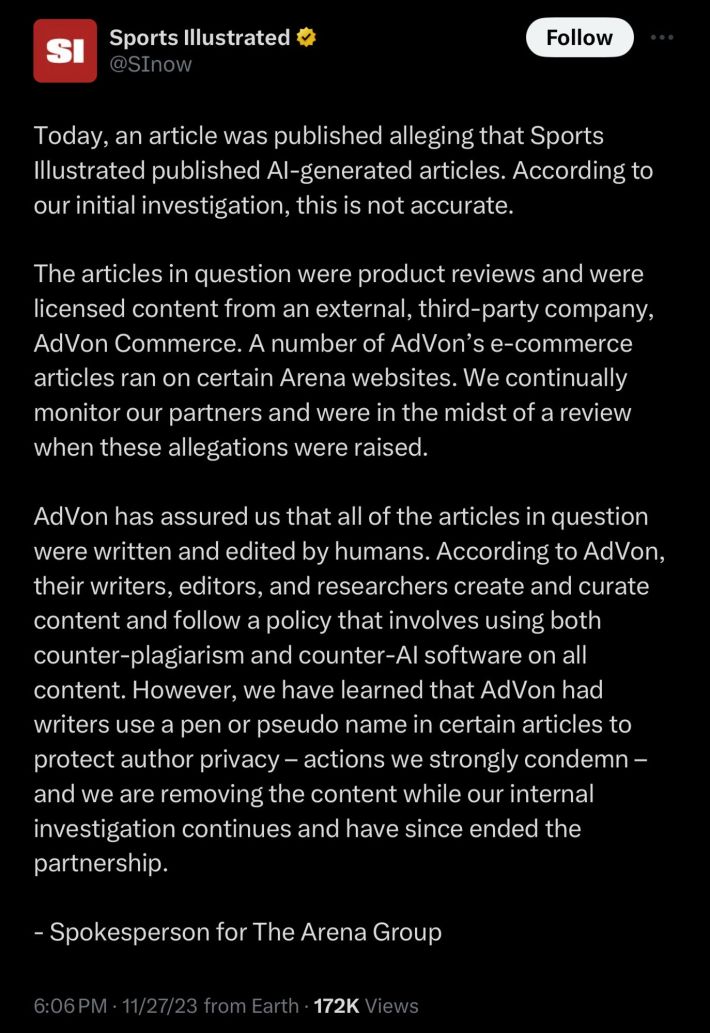

When Futurism's Maggie Harrison asked Sports Illustrated's publisher, The Arena Group, about the stories, the publisher deleted the posts instead of replying. On Monday evening, The Arena Group released a statement blaming "an external, third-party company," AdVon Commerce, whose "e-commerce articles ran on certain Arena websites," while insisting that all these terrible, AI-scented posts had in fact been written by actual humans, albeit under "pseudo names."

"It sounds like The Arena Group's investigation pretty much just involved asking AdVon whether the content was AI-generated, and taking them at their word when they said it wasn't," Harrison wrote. "The implication seems to be that AdVon invented fake writers, assigned them fake biographies and AI-generated headshots, and then stopped right there, only publishing content written by old-fashioned humans."

It's worth noting that this was all part of the plan for the site back in 2019, which is when a media concern called TheMaven, which rebranded to The Arena Group in 2021 and has licensed Sports Illustrated from a ghoulish rentier concern called Authentic Brands Group since 2020, took control. At the time, the idea of AI-written content was beyond even the wildest fantasies of such small-time chumbox jockeys. They took a more familiar route, laying off half of Sports Illustrated's newsroom and standing up a series of team-specific content mills seemingly patterned on the old SB Nation model. Those sites were to be run by independent contractors, whom Maven execs referred to as "partners"; as we reported at Deadspin, those partners would be paid salaries around $25,000, through LLCs, with the possibility of making more through traffic bonuses. But, Maven COO Bill Sornsin told prospective partners, there was another way that they could boost their pay: by participating in something called "contributor flow," with a TheMaven-owned site called HubPages, which Sornsin described as "huge, in the 50 million monthly unique range." He went on:

And their model is a contributor model where anyone can submit a story and they have a small team of editors and SEO experts that will run your story through some automation, make some human edits, and they’ll put it out on one of their sites and if it picks up significant traffic from Google, you’ll actually get paid... People make real money on HubPages. They have this technology and protocol that make stories absolute gold to Google on SEO.

This model was something of an obsession for TheMaven; the company's CEO, Ross Levinsohn, had planned to try the gambit, which he sometimes called "gravitas with scale," at The Los Angeles Times in 2017 before he wound up on administrative leave following sexual harassment allegations. Of course, the chowderheads in charge of The Arena Group are not in the same universe of prominence as the Silicon Valley billionaires obsessing over AI, or the Hollywood studio heads who started and lost a pair of high-profile labor disputes over it. The Arena Group are small fry; Spanfeller-grade goons, un-respected and un-respectable, whose careers amount to gigging some algorithms in the most oafish possible ways.

But their fixation on AI is effectively the same as their big-time counterparts', and serves the same end: to make and sell something that only people can make in a useful way, but without the people. The Arena Group knows that this stuff sucks and is useless; they would defend it if they didn't. Their bet, in the case of these shabby blogs but also more broadly, is that none of that really matters.

Whether a person or a program wrote the posts that ran under Drew Ortiz's byline does not matter, either, really; the quality of the product is the same, and perfectly reflects the disregard that The Arena Group has for both its readers and the people who have somehow continued to do world-class work at Sports Illustrated even as its owners strive to replace them with Drew Ortiz and "Sora Tanaka" and their opaque product reviews. "SI used my name and face in an email to sell subscriptions last week," Sports Illustrated's Emma Baccellieri posted on Monday evening. "To think that they were simply making up names and faces while making this kind of sales pitch in the name of 'independent journalism' from 'the most trusted name in sports' is beyond infuriating." It is, but also it fits.

If you are someone who gets excited about stupid things, this is a very exciting time. It is notably less exciting if you prefer a bit more substance in your tech industry fads, but there's still some morbid interest in watching the easily excitable billionaires who sulk and bluster atop their industry repeatedly fall for their own windy sales pitches. That has happened frequently enough, and recently enough, and about supposedly world-altering technologies that were so instantly obvious as janky, unwanted, and deeply dire, that it's all very easy to dismiss in this case. This would be a mistake, for a few reasons.

The first is that, for all the promise that artificial intelligence may yet have in various applications, the one that currently has Silicon Valley types toggling between strategic sales-oriented meta-freakouts and the real thing remains preposterously wack. It is the sort of "large language model" program that you can find giving discursive, confident, frequently wrong answers to simple questions through technologies like OpenAI's ChatGPT; Alex Pareene aptly described these as "paraphrasing machines ... built for book reports, not books." The very rich people who want this technology to be the future, so that it can make them richer there, are also worried about someday being enslaved or vaporized by it. "The biggest camps can be described thusly," John Herrman writes in New York. "AI is going to be huge, therefore we should develop it as quickly and fully as possible to realize a glorious future; AI is going to be huge, therefore we should be very careful so as not to realize a horrifying future; AI is going to be huge, therefore we need to invest so we can make lots of money and beat everyone else who is trying, and the rest will take care of itself."

The very real possibility that this technology, which currently lands somewhere around Clippy From Microsoft Word But Sometimes Racist, might top out around "making online customer service experiences somewhat neater" just doesn't rate with this community. It's the wrong size. These are builders, seers, the rightful authors of the acceleration that will make humanity's future; their understanding of themselves and their relationship to everything else is both grandiose and abstracted enough that they can only imagine their innovations as ushering in either utopia or apocalypse, with the rest of humanity mostly just dealing with it. "If you’re wondering why people are treating OpenAI’s slapstick self-injury like the biggest story on the planet," Herrman writes, "it’s because lots of people close to it believe, or need to believe, that it is."

This is partially because all of these people are just absolutely rammed to the max on prescription stimulants and bored in the fussy ways that very rich people get bored. But this is also how these people talk when they are trying to sell something, which can be confusing. More to the point, this is just how they talk now—in these sweeping tones of civilizational significance, in this framework in which the future of humanity depends mostly on the masses getting out of these enterprising men's way and providing prompt service when called upon to do so. One thing that I try to remember, in considering all this, is that these people also talked like this about The Metaverse, the ostensible promise of which was that it would someday allow your legless avatar to attend work meetings inside your computer. Still, these donkeys have a lot of money and a lot of power, and while that has not enabled them to brute-force any of their dopey imagineerings into anything that any normal person has to deal with or care about, it does mean that their self-administered nervous breakdowns over this shit make the news. It also means that the residual toxins in these ideas tend to show up further downstream, where the rest of us live, and work.

These powerful people and their weird gilded toys and what they don't care about have become everyone's problem. In the most literal sense, all the noise that these rich, reckless bunglers create makes it harder to know things. Their mess shits up the search engines; their AI spins stupid new lies to life by haplessly plagiarizing and re-plagiarizing itself, eating its own excretions until it is as cocksure, incoherent, and wrong as its apostles themselves.

The assurance from Sports Illustrated's brass that what Futurism flagged as AI-generated content was in fact the product of 100 percent all-natural free-range human spammers is not only not reassuring, but just a restatement of the wild insult and threat running through all of this. It doesn't matter if they are lying, or selling, or in earnest. They are in the business of selling noise, and they are selling a lot of it. What sounds like a metaphor—the wailing and yammering and relentless showboating salesmanship of the rich making every other sound indistinct or inaudible—is in point of fact just a description.